Best practices

Short habits that make Copilot work well. Each links to a longer article if you want the full story.

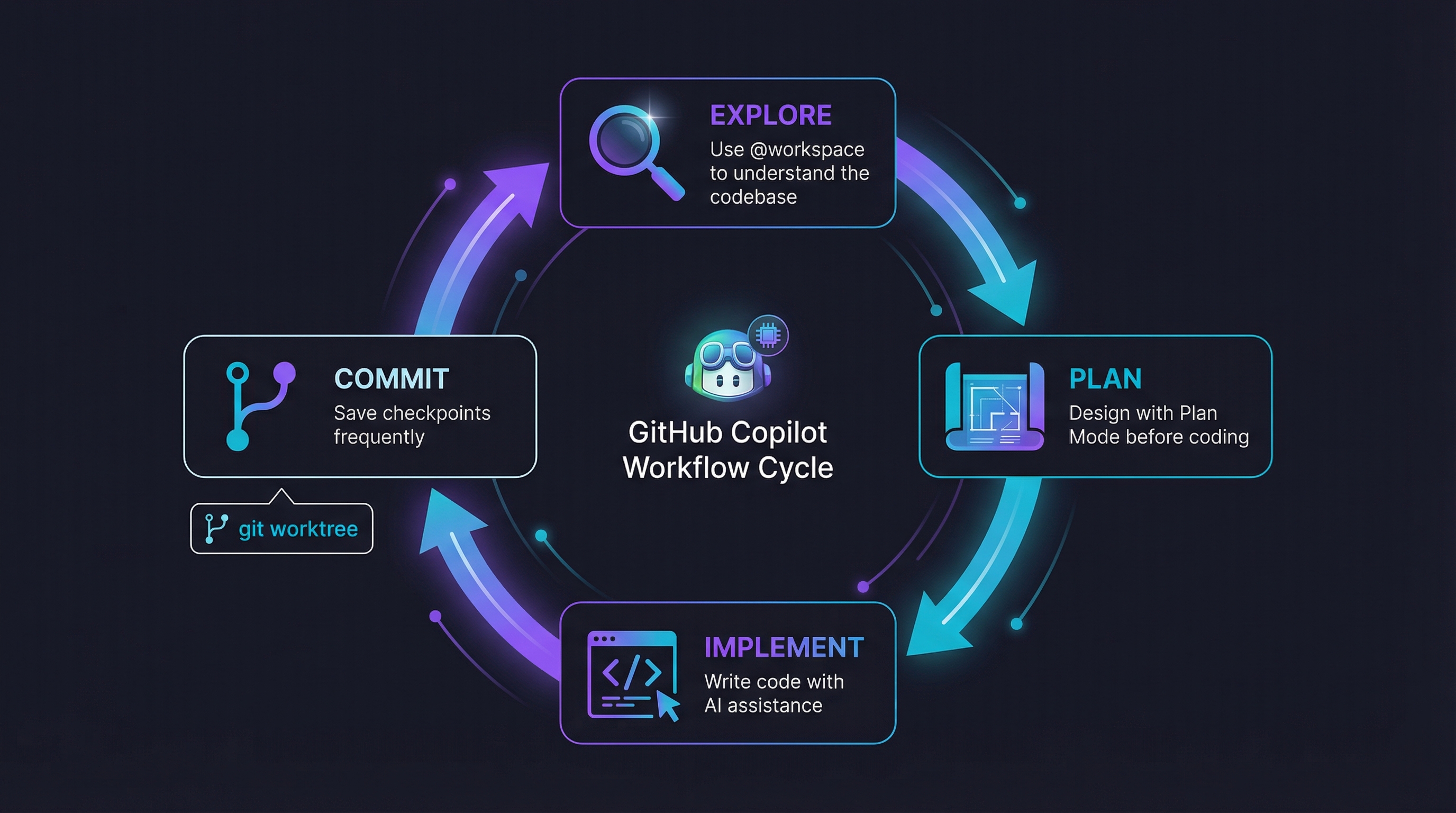

Workflow

Plan before you code. Use @workspace to explore, switch to Plan Mode to design, then implement and commit. Commits are checkpoints, so make them often. git worktree helps when you're juggling branches.
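As a rough sketch of the git worktree tip, the commands below check out a second branch in its own directory without touching the current checkout. The repository is a throwaway created under a temporary directory, and the branch name feature-login and the path ../app-feature-login are hypothetical; substitute your own.

```shell
# Throwaway repository so the commands can be tried safely (hypothetical paths).
set -eu
demo="$(mktemp -d)"
git init -q "$demo/app"
cd "$demo/app"
git -c user.name=demo -c user.email=demo@example.com \
    commit -q --allow-empty -m "initial commit"

# Create a new branch and check it out in a sibling directory.
# The original checkout keeps its own branch and working files.
git worktree add -b feature-login ../app-feature-login

# Show every worktree attached to this repository.
git worktree list

# Remove the extra checkout once the branch is merged or abandoned.
# The branch itself is kept; only the directory goes away.
git worktree remove ../app-feature-login
```

Because each worktree is a separate directory, one way to use this is to open each in its own editor window and keep a separate chat session per branch.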

Brainstorm with structure. Tell Copilot exactly how you want output: "give me 3 options, compare pros and cons in a table." The pros-and-cons prompt automates this. See prompt engineering for more on shaping responses.

Spec-driven for greenfield work. For new projects or PoCs, start from a spec. The spec agent interviews you, builds a plan, and hands it off. spec-kit adds task tracking on top.

Context

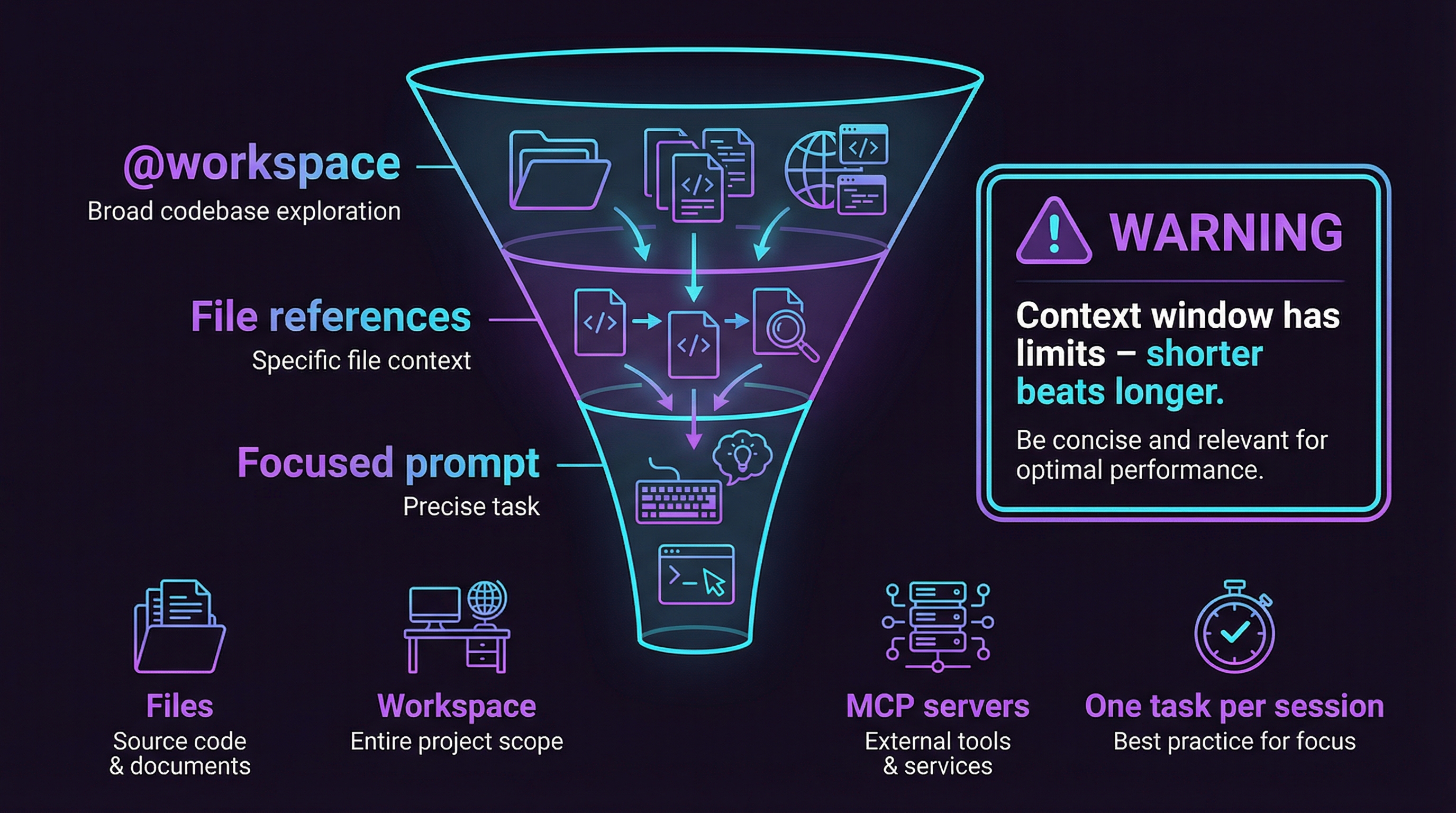

This is the single biggest lever. Copilot can only work with what it can see.

- Feed it relevant files with #file: references; don't make it guess
- Use @workspace for broad exploration, then narrow down
- Pull in external knowledge via MCP servers when built-in context isn't enough
- One task per chat session. Start fresh when you switch topics, because stale context degrades quality fast

The full playbook is in context engineering. Read that one.

Context windows have hard limits. Shorter and more focused beats longer and comprehensive every time.

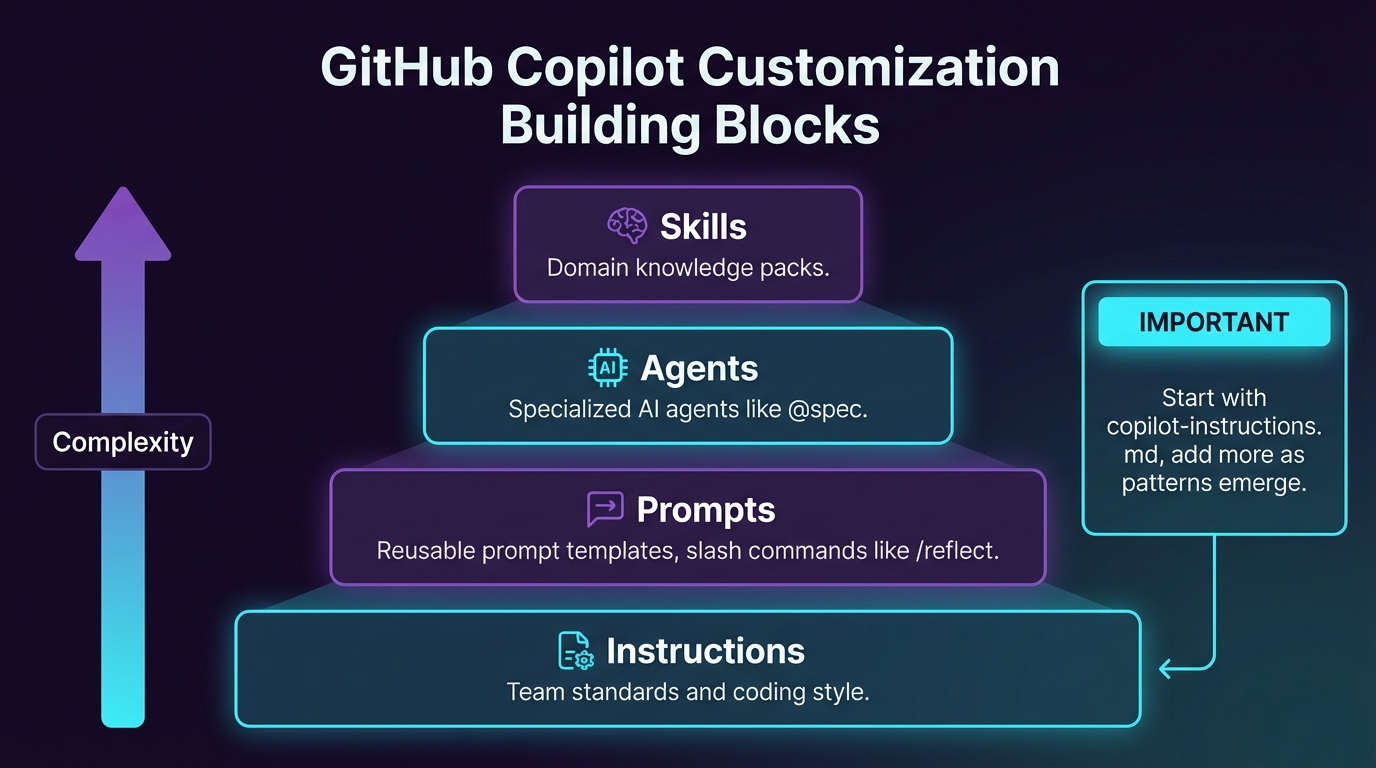

Customizations

Copilot is configurable through instructions, prompts, agents, and skills. If you find yourself repeating the same guidance or correcting the same mistakes, encode it. That's the whole point.

Start with a copilot-instructions.md for team standards. Add .instructions.md files for specific concerns (testing conventions, coding style). Build prompts and agents as patterns emerge from your daily work.
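A minimal sketch of what a starting copilot-instructions.md might look like. The .github/ location follows GitHub's convention for repository-wide instructions; the rules themselves are hypothetical placeholders meant to show the shape of the file, not recommendations from this guide.

```markdown
<!-- .github/copilot-instructions.md (contents are hypothetical examples) -->
# Team standards

- Use TypeScript strict mode; avoid `any`.
- Follow the existing folder layout under `src/`.
- Write a unit test for every bug fix.
- Prefer small, focused commits with descriptive messages.
```

Narrower .instructions.md files can then hold rules for a single concern, such as testing conventions. In VS Code these files can carry an applyTo glob in their front matter to limit them to matching paths, though the available options depend on your editor and Copilot version.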

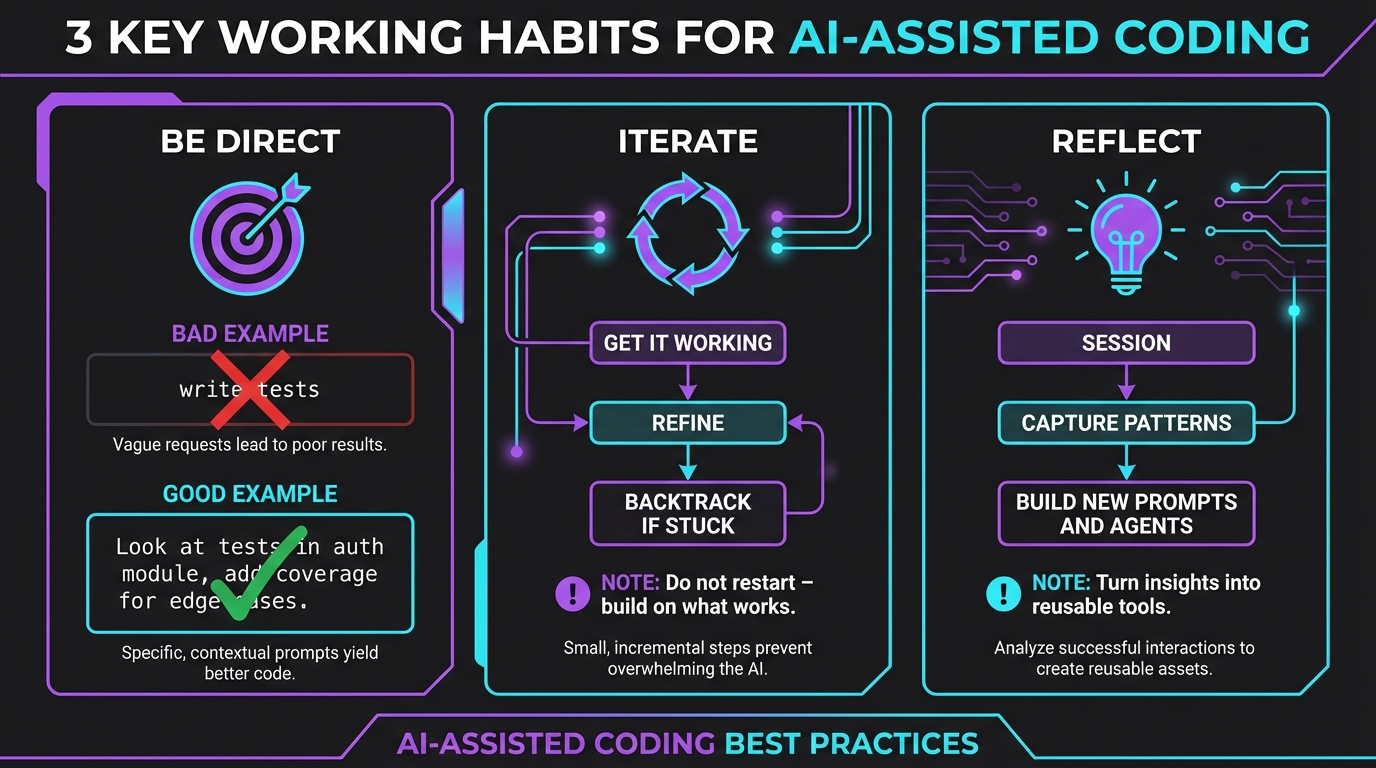

Working habits

Be direct. Vague prompts get vague answers. State constraints, mention the tech stack, point to examples in your codebase. "Look at the tests for this module" beats "write tests."

Iterate, don't restart. Get something working first, then refine. If it's going sideways, backtrack — don't keep prompting on a broken thread.

Reflect. After a session, run /reflect to capture what worked and what didn't. Patterns you notice can become prompts, agents, or skills. See session reflection for the full workflow.